AI deepfakes are endangering democracy. Here are 4 ways to fight back

With the recent explosion of AI, dazzling images, videos, audio and texts can now be easily generated by anyone with just a few simple inputs. While this technology offers many astonishing benefits, it also poses significant dangers.

Among the most pernicious of these is the creation of deepfakes – highly realistic yet manipulated or fabricated content that falsely depicts real people doing or saying things they never did. Our ability to discern fact from fiction, along with democracy itself, is in the crosshairs.

In recent months, deepfakes have entered the mainstream like never before. In February, ads on Facebook and Instagram were discovered using AI videos falsely depicting Piers Morgan, Oprah Winfrey, and other celebrities endorsing pseudo-scientific self-help courses.

In January, Taylor Swift was the victim of deepfake pornography, as fake explicit images of the pop star flooded Twitter/X, garnering millions of views.

Celebrities are far from the only victims. Regular people, especially women and girls, are increasingly targeted. A study reported by MIT Technology Review found that 96% of deepfake videos online were pornographic and nonconsensual, nearly all targeting women.

NBC News recently reported that middle school students in Beverly Hills, California, were found creating and circulating deepfake nude photos of their classmates. Similar incidents are occurring in high schools across the country.

As this tech rapidly improves, Oren Etzioni, a computer science professor at the University of Washington who researches deepfake detection, said, “We are going to see a tsunami of these AI-generated explicit images.”

Deepfakes are putting democracy itself at risk. The U.S., U.K. and about 70 nations encompassing almost half the global population have national elections this year.

These will be the first elections in which sophisticated deepfake tech is readily accessible not just to government entities and nefarious actors, but to anyone in the world with a phone or laptop.

We’ve seen previews of deepfake interference in the political arena. A viral deepfake video in 2022 falsely depicted Ukrainian President Volodymyr Zelenskyy announcing a surrender. In 2023, AI-generated videos promoting the Chinese Communist Party were shared by pro-China bot accounts across Facebook and Twitter/X.

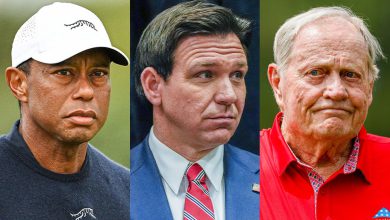

Here at home, the U.S. presidential election is already being disrupted. In January, AI-generated images shared on social media falsely depicted former President Trump with young girls on Jeffrey Epstein’s plane.

Last month, days before New Hampshire’s presidential primary, thousands of calls were made, dissuading recipients from voting with the message, “Your vote makes a difference in November, not this Tuesday.” This message, mimicking President Biden’s voice, was generated by AI. The perpetrator who created the fake audio said it took him just 20 minutes and cost $1.

Nina Jankowicz, former executive director of the Department of Homeland Security’s disinformation task force, recently warned that the Russian government has employed deepfake pornography to tarnish the reputations of female politicians in Ukraine and Georgia. She warned that similar strategies are likely to be deployed against female leaders in the West.

Deepfakes also undermine trust in authentic media. Renée DiResta, from the Stanford Internet Observatory, highlighted how claims of AI are now used to discredit legitimate content, citing denials of real videos of Hamas’ attacks on Oct. 7 as an example.

So far, remedies are either nonexistent or simply not effective. Several states have enacted patchworks of laws mandating the labeling of deepfakes or prohibiting those that falsely depict candidates. A few federal bills have been proposed, but nothing has been enacted.

Social media giants including Meta and YouTube have implemented rules against manipulated media that’s deliberately misleading, including deepfakes. The effectiveness of these policies has been far from perfect. Often, by the time these deepfakes are reported, they have already reached millions of users. In early February, Meta’s own oversight board criticized the company’s regulations as “incoherent.”

The surge in AI deepfakes comes at a time when many social media companies, particularly Twitter/X, are rolling back efforts to moderate controversial content, especially related to politics. Katie Harbath, a former public policy director at Facebook, noted that companies increasingly want to avoid controversy for over-moderating, stating, “A lot of them have been more like, ‘It’s probably better for us to be as hands-off as possible.’”

What can be done right now? Where do we start?

First, helpful AI can be used as a tool against harmful AI. Because AI systems learn from examples, they can be trained to detect deepfakes, identifying subtle patterns humans might not notice.

Recently, some large social media and AI companies including Meta, Google, and OpenAI have begun partnering to watermark and label AI-generated content. This kind of cross-platform collaboration is critical and must be strengthened and expanded.

Second, the prospect of legal penalties and fines for disseminating deepfakes would serve as a significant deterrent. The vast majority of Americans are in favor of federal measures, with 84% supporting legislation that would outlaw non-consensual deepfake pornography, according to the Artificial Intelligence Policy Institute.

Strong federal laws that explicitly protect victims of deepfakes must be established. Even preliminary federal rules and fines could significantly reduce the spread.

Third, social media companies must be held accountable for promoting deepfake content. Currently, Section 230 shields Web companies from liability for user-generated content.

An effective reform would be to hold companies liable for deepfake content that they play an active role in spreading. This includes content spread through targeted ads or algorithmic boosting, where it is served to users who otherwise wouldn’t have seen it.

Currently, the large social networks benefit from controversial deepfake content going viral. It increases user engagement and ad revenue, which leads to laissez-faire enforcement.

Fourth, improved media literacy is urgently needed. Studies show that education on these topics can significantly diminish the overall persuasiveness of deepfakes and other online manipulations.

The MIT Center for Advanced Virtuality is a good example: it provides online media literacy courses for college students and educators. Similar initiatives can help improve media literacy and critical thinking skills among middle school and high school students.

A united front combining technological, legislative and educational efforts is required. This includes increased collaboration among social media and AI companies, policymakers, educators and users alike. The stakes couldn’t be higher.
